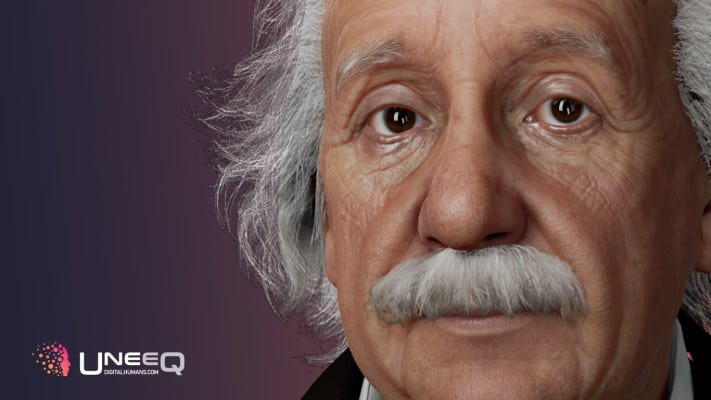

You’ll need to prick up your ears for this slice of deepfakery emerging from the wacky world of synthesized media: a digital version of Albert Einstein, with a synthesized voice that has been (re)created using AI voice cloning technology drawing on audio recordings of the famous scientist’s actual voice.

The startup behind the “uncanny valley” audio deepfake of Einstein is Aflorithmic (whose seed round we covered back in February).

The video engine powering the 3D character rendering components of this “digital human” version of Einstein, meanwhile, is the work of another synthesized media company, UneeQ, which is hosting the interactive chatbot version on its website.

Aflorithmic says the “digital Einstein” is intended as a showcase for what will soon be possible with conversational social commerce. Which is a fancy way of saying that deepfakes posing as historical figures will probably be trying to sell you pizza soon enough, as industry watchers have presciently warned.

The startup also says it sees educational potential in bringing famous, long-deceased figures to interactive “life”.

Or, well, an artificial approximation of it: the “life” is purely virtual, and Digital Einstein’s voice is not a pure tech-powered clone either. Aflorithmic says it also worked with an actor to do voice modeling for the chatbot (because how else was it going to get Digital Einstein to say words the real deal would never have dreamed of saying, like, er, “blockchain”?). So there’s a bit more than AI artifice going on here too.

“This is the next milestone in showcasing the technology to make conversational social commerce possible,” Aflorithmic’s COO Matt Lehmann told us. “There are still more than one flaws to iron out as well as tech challenges to overcome but overall we think this is a good way to show where this is moving to.”

In a blog post discussing how it recreated Einstein’s voice, the startup writes about progress it made on one challenging element of the chatbot version. It says it was able to shrink the response time, measured from receiving input text from the computational knowledge engine to its API rendering a voiced response, from an initial 12 seconds to less than three (which it dubs “near-real-time”). But that is still enough of a lag to ensure the bot can’t escape being a bit tedious.

Laws that protect people’s data and/or image, meanwhile, present a legal and/or ethical challenge to creating such “digital clones” of living humans, at least without asking (and most likely paying) first.

Of course, historical figures aren’t around to ask awkward questions about the ethics of their likeness being appropriated for selling stuff (if only the cloning technology itself, at this nascent stage). Licensing rights may still apply, though, and in fact do in the case of Einstein.

“His rights lie with the Hebrew University of Jerusalem who is a partner in this project,” says Lehmann, before ‘fessing up to the artistic license element of the Einstein “voice cloning” performance. “In fact, we actually didn’t clone Einstein’s voice as such but found inspiration in original recordings as well as in movies. The voice actor who helped us modelling his voice is a huge admirer himself and his performance captivated the character Einstein very well, we thought.”

Turns out the truth about high-tech “lies” is itself a bit of a layer cake. But with deepfakes it’s not the sophistication of the technology that matters so much as the impact the content has, and that is always going to depend on context. And however well (or badly) the faking is done, how people respond to what they see and hear can shift the whole narrative, from a positive story (creative/educational synthesized media) to something deeply negative (alarming, misleading deepfakes).

Concern about the potential for deepfakes to become a tool for disinformation is rising, too, as the tech gets more sophisticated. That is helping to drive moves toward regulating AI in Europe, where the two main entities responsible for “Digital Einstein” are based.

Earlier this week a leaked draft of an incoming legislative proposal on pan-EU rules for “high risk” applications of artificial intelligence included some sections specifically targeted at deepfakes.

Under the plan, lawmakers look set to propose “harmonized transparency rules” for AI systems that are designed to interact with humans and those used to generate or manipulate image, audio or video content. So a future Digital Einstein chatbot (or sales pitch) is likely to need to unequivocally declare itself artificial before it starts faking it, sparing internet users from having to apply a virtual Voight-Kampff test.

For now, though, the erudite-sounding interactive Digital Einstein chatbot still has enough of a lag to give the game away. Its makers are also clearly labeling their creation, in the hope of selling their vision of AI-driven social commerce to other businesses.